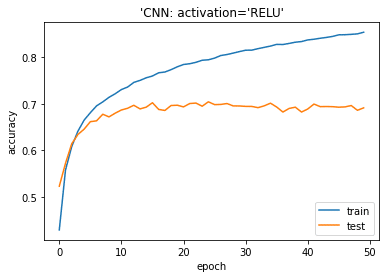

These are the dance moves of the most common activation functions in deep learning. My friend and colleague Giray inspired me to write this post.

Almost every day a new innovation is announced in the ML field. There are so many that the number of research papers published about machine learning is growing faster than Moore's law. For example, the second AI winter ended when the vanishing gradient problem was discovered and the ReLU activation function was introduced. In 2017, Google researchers found that Swish, an extension of the sigmoid function, outperforms ReLU. Later, it was shown that an extended version of Swish named E-Swish outperforms many other activation functions, including both ReLU and Swish. E-Swish is defined as β · x · sigmoid(x), where β is a scaling parameter.

Advanced frameworks cannot keep up with innovations like these. For example, at the time of writing, you cannot use Swish-based activation functions in Keras. They might appear in a future release, but until the related patch is merged you may have to use another activation function. This post shows you how to use a custom activation function such as Swish or E-Swish with Keras and TensorFlow.

All you need to do is create your own activation function. Here, I'll use Swish, which is x times sigmoid(x), and include it in a convolutional neural network. The relevant excerpt of the model is below. `model` and `num_classes` are defined elsewhere in the full script.

```python
from keras import backend as K
from keras.layers import Conv2D, Dense

def swish(x):
    return x * K.sigmoid(x)  # Swish: x times sigmoid(x), built only from keras.backend ops

model.add(Conv2D(32, (3, 3)))  # 32 is the number of filters and (3, 3) is the filter size; remaining arguments are not shown in the original
model.add(Conv2D(64, (3, 3), activation=swish))  # apply 64 filters of size 3x3 on the 2nd convolution layer
model.add(Dense(512, activation=swish))
model.add(Dense(num_classes, activation='softmax'))
```

The activation function is used in the feed-forward step, but backpropagation needs its derivative. We only define the activation function and do not provide the derivative. The framework works out the differentiation for backpropagation itself. This works because the function is built from keras.backend operations. If you write the Swish function without keras.backend, fitting will fail.

We have now covered how to add a new activation function to the learning process with the Keras / TensorFlow pair. Picking the most suitable activation function is a key design decision for scientists. It matters as much as choosing the network structure (number of hidden layers, number of nodes in the hidden layers) and the learning parameters (learning rate, number of epochs). Now you can design your own activation function, or use any newly introduced one, just like the dance moves in the picture above.
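The framework handles the feed-forward versus backpropagation split on our behalf. The plain-Python sketch below (no Keras required) makes that split explicit. It computes Swish and E-Swish for the forward step. It also computes the derivatives that backpropagation needs, which the framework would otherwise derive automatically. For Swish, that derivative is sigmoid(x) + x · sigmoid(x) · (1 − sigmoid(x)). The sketch then checks these derivatives against a central finite difference. The helper names and the value beta = 1.5 are illustrative assumptions, not part of Keras or the E-Swish paper.

```python
import math

def sigmoid(x: float) -> float:
    # numerically stable logistic sigmoid (avoids overflow in math.exp for large |x|)
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)

def swish(x: float) -> float:
    # Swish: x times sigmoid(x), used in the feed-forward step
    return x * sigmoid(x)

def e_swish(x: float, beta: float = 1.5) -> float:
    # E-Swish: beta * x * sigmoid(x); beta = 1.5 is an illustrative assumption
    return beta * x * sigmoid(x)

def swish_grad(x: float) -> float:
    # d/dx [x * s(x)] = s(x) + x * s(x) * (1 - s(x)), needed in backpropagation
    s = sigmoid(x)
    return s + x * s * (1.0 - s)

def e_swish_grad(x: float, beta: float = 1.5) -> float:
    # beta is a constant factor, so it simply scales the Swish derivative
    return beta * swish_grad(x)

def numeric_grad(f, x: float, h: float = 1e-5) -> float:
    # central finite difference, used only to check the analytic derivatives above
    return (f(x + h) - f(x - h)) / (2.0 * h)

if __name__ == "__main__":
    for x in (-2.0, 0.0, 1.5):
        print(f"x={x:+.1f}  swish={swish(x):.4f}  "
              f"analytic grad={swish_grad(x):.4f}  numeric grad={numeric_grad(swish, x):.4f}")
```

The analytic and numeric columns should agree closely. That agreement is the point of the keras.backend approach. You write only the forward function, and the framework obtains the correct derivative from the building blocks.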

